Jon Allspaw (@allspaw) from Etsy talked about the role that Anomaly Detection, Fault Tolerance and Anticipation play in producing highly scalable software systems (Fault tolerance, anomaly detection, and anticipation patterns, slides [PDF, 5MB]).

As head of technical operations at Etsy, whose web traffic is pretty substantial, Jon focused on resilience in software systems: what it is, and how to achieve it.

Teams for Resilience

Jon started by characterizing a sequence of states which engineering teams can adopt (alongside the corresponding systems) in order to improve resilience:

- Anticipation

- Monitoring

- Response

- Learning

Jon identified feedback loops between all of these activities, which implies rapid and effective inter-team communication; outsourcing a team (such as Operations) to an unresponsive third party is not an option here.

Monitoring Types

We then looked at different kinds of monitoring, as being able to detect variance and failure is the first step towards resilience:

Supervisory Monitoring

- Active health check: Question: “Are you okay?” Answer: “Yes”; Question: “Are you okay?” Answer: “Yes”, etc.

- Easy to understand, but prone to messaging failures: if responses are lost, the monitor can trigger an alert unnecessarily.

- Passive health check: simply read data which has enough context already: “System x was okay at HH:MM:SS”

- Simple and more scalable than Active health check, but not so applicable to networked systems (where the data may not be readily available).

- Passive in-line logging: “Not hearing bad news is good news”

- On-demand publish

- The onus is on the application to publish information in a timely fashion

- The history (trends) of the data is crucial: positive vs. negative data mean different things.
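The passive health check described above can be sketched in a few lines. This is only an illustration, not anything shown in the talk: the `stale_systems` function, the host names and the two-minute limit are all hypothetical. It reads "system x was okay at HH:MM:SS" records that already exist and flags any system whose last record is too old.

```python
from datetime import datetime, timedelta

def stale_systems(last_ok, now, max_age):
    """Return the names of systems whose last "okay" record is older than max_age."""
    return sorted(name for name, seen in last_ok.items() if now - seen > max_age)

# Hypothetical data: the time each system last reported that it was okay.
now = datetime(2011, 6, 1, 12, 0, 0)
last_ok = {
    "web-1": datetime(2011, 6, 1, 11, 59, 30),  # 30 seconds ago
    "db-1": datetime(2011, 6, 1, 11, 50, 0),    # 10 minutes ago
}

print(stale_systems(last_ok, now, timedelta(minutes=2)))  # ['db-1']
```

No question is ever sent to the monitored system, which is why this style scales better than an active check, provided the timestamps are readily available.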

In-Line Monitoring

To address a gap in the software space for passive in-line logging, Etsy wrote the statsd library for auto-calculating mean, 90th percentile, etc., as numeric data is logged via a “fire-and-forget” UDP API.
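As a rough sketch of what “fire-and-forget” means in practice, the snippet below sends a timing value as a single UDP datagram in the plain-text StatsD format (`name:value|ms`). The metric name, the helper functions and the assumption that a StatsD daemon is listening on localhost port 8125 are mine, not taken from the talk.

```python
import socket

def format_timing(name, millis):
    """Build a StatsD timing datagram, e.g. b'search.latency:320|ms'."""
    return f"{name}:{millis}|ms".encode("ascii")

def send_metric(payload, host="127.0.0.1", port=8125):
    """Send one UDP datagram and never wait for (or expect) a reply."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sock.sendto(payload, (host, port))
    except OSError:
        pass  # a metrics failure must never break the application
    finally:
        sock.close()

# Hypothetical metric name; the address of the StatsD daemon is an assumption.
send_metric(format_timing("search.latency", 320))
```

Because UDP has no handshake and no acknowledgement, the application pays almost nothing to log a value, and a missing or overloaded metrics server cannot slow it down.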

What is abnormal?

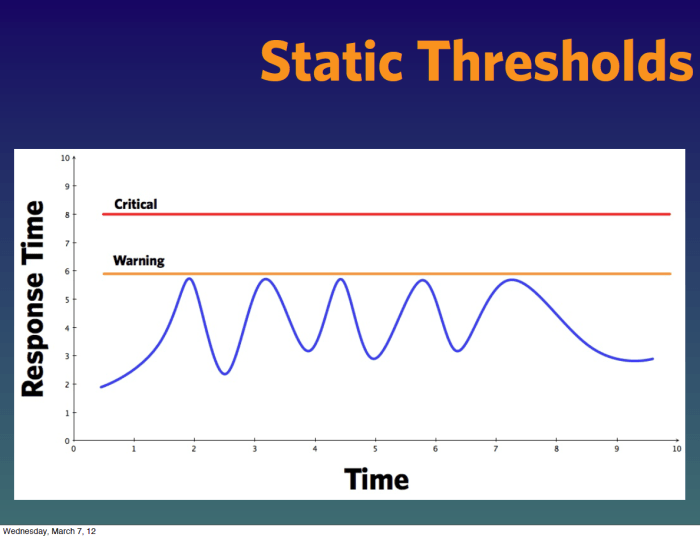

One of the most revealing parts of the session was Jon’s look at “normal” and “abnormal” behavior. In particular, many monitoring systems (Zabbix, Nagios, etc.) have static thresholds for alarms and alerts, but in the real world, thresholds change over time and for different times of day.

For me, the slide above summed up the problem of static thresholds – we can see that a system has a large variance in response, but we likely receive no alerts in this condition, as we happen not to have triggered what is a fairly arbitrary Warning threshold.

There is also the concept of “normal but noisy”; there can be large spikes in performance/demand/requests, but that can be normal. Peaks and troughs can be huge over days, weeks, months etc., so the thresholds need to be dynamic.

Jon covered the use of the Holt-Winters exponential smoothing algorithm for predicting time series and confidences (upper and lower bounds); essentially, the algorithm weights more recent data more highly than older data. Etsy has written patches for Graphite and Nagios to allow use of a moving alarm/alert threshold determined by the Holt-Winters algorithm [PDF], and this has allowed them to respond much more dynamically to real-world traffic events.
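To make the idea of a moving threshold concrete, here is a deliberately simplified sketch. It is not Holt-Winters itself and not Etsy's patch: it uses single exponential smoothing (no trend or seasonal terms) for the expected value, plus an exponentially weighted deviation for the upper and lower bounds. The function name, the `alpha`, `width` and `warmup` parameters and the sample data are all assumptions for illustration.

```python
def find_anomalies(values, alpha=0.5, width=3.0, warmup=3):
    """Return indexes of points that fall outside a moving confidence band.

    level     : exponentially weighted mean (recent data counts more)
    deviation : exponentially weighted mean absolute error
    band      : level +/- width * deviation, recomputed at every point
    """
    level = values[0]
    deviation = 0.0
    anomalies = []
    for i, x in enumerate(values):
        lower = level - width * deviation
        upper = level + width * deviation
        # Skip the first few points while the band is still settling.
        if i >= warmup and not (lower <= x <= upper):
            anomalies.append(i)
        # Update the band after judging the point against the previous state.
        deviation = alpha * abs(x - level) + (1 - alpha) * deviation
        level = alpha * x + (1 - alpha) * level
    return anomalies

# Hypothetical response times with one obvious spike at index 6.
samples = [100, 102, 98, 101, 99, 100, 160, 101]
print(find_anomalies(samples))  # [6]
```

The band narrows when the data is quiet and widens after a spike, so the alert threshold follows the data instead of sitting at a fixed value. Full Holt-Winters adds trend and seasonal components so that daily and weekly cycles are also treated as normal.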

Adaptive Systems and Fault Tolerance

We then covered adaptive systems and the nature of fault tolerance. The first question to ask when designing for resilience is:

Do we actually want to tolerate this particular failure? Or should we mask it? Or recover from it?

In other words, ask: what do we NOT care about? This allows you to focus on the failures which are worth planning and adapting for.

Jon used the example of the shock absorber as a mechanical compensator (or damper in Control Engineering terms). We should be looking to build systems where each part can absorb a degree of “shock”:

http://www.flickr.com/photos/iguanajo/54010162/sizes/m/in/photostream/

Load testing and stress testing are clearly useful for establishing the working limits and tolerances of system components, although the most awkward (and destructive) failure scenarios are also the most difficult to predict: accurate and timely monitoring, with both computers and humans watching the trends, is essential to trap error conditions before they lead to destructive failures (see Fault Tolerance Modes below).

Functional Resonance

In particular, Functional Resonance can have a destructive effect on systems composed of distinct subsystems. Functional Resonance is the unexpected frequency-domain coupling between connected systems, leading to unexpected and apparently unpredictable “cascading” behaviour (such as the disk mirroring storm which led to the AWS outage in 2011); it is the result of the aggregate of individual subsystem tolerances.

Jon did not elaborate on how cascading functional resonance situations can be avoided. A recent control-systems approach to avoiding functional resonance is the Functional Resonance Analysis Method (FRAM), which has been used to analyse air traffic accidents and to improve communication within organizations so that systems are better able to adapt; see http://www.resilience-engineering.org/ and the Books on System Resilience section below for more details.

Fault Tolerance Modes

We looked at different strategies for dealing with failure in use at Etsy:

- In-line fault tolerance:

- Each web server has its own perspective on availability of machines it needs to connect to

- Each machine caches the results of connection attempts, and tries the next server if the first is unavailable

- The benefits are that a SPOF (typically the load-balancer) is removed and the system becomes “self-healing” to an extent

- “Fail Closed”:

- Close off a feature upon detection of failure

- Remove the feature from production

- Monitors also check the downstream dependencies of whatever they are monitoring, so you have higher confidence in the initial check, e.g. check the database behind a search index server

- Can get too crazy – you can end up removing too many things from Production if you’re too aggressive with the lock-down algorithm

- “Fail Open”:

- Mask the error and continue

- For example, at Etsy, if Geo-IP targeting does not return within 50ms, they simply do not show the geo-located results (graceful degradation)

- Rate Limiting: if internal checks and calculations take too long to complete, then ignore them and let the user continue with their purchase. Fire off a UDP metric saying “this failed”.

- Value the user experience over internal metrics
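The “Fail Open” behaviour above can be sketched as a small wrapper: give the call a deadline, and if it is late or raises, record the failure and carry on with a harmless default. This is a minimal illustration under my own assumptions rather than Etsy's code; `fail_open`, the two lookup functions and the in-memory `failures` list (standing in for a UDP metric) are hypothetical, and only the 50ms budget comes from the talk.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Shared worker pool so that a slow call cannot block the calling thread.
_pool = ThreadPoolExecutor(max_workers=4)

def fail_open(func, timeout_s, default, on_failure=None):
    """Run func, but return `default` if it is too slow or raises."""
    future = _pool.submit(func)
    try:
        return future.result(timeout=timeout_s)
    except Exception:
        # Covers both the timeout and any error raised inside func.
        if on_failure is not None:
            on_failure()
        return default

def slow_geo_lookup():
    time.sleep(0.2)  # hypothetical lookup that misses the 50ms budget
    return ["items near you"]

def fast_geo_lookup():
    return ["items near you"]

failures = []  # stand-in for firing a "this failed" metric

def record_failure():
    failures.append("geoip.failed")

slow_result = fail_open(slow_geo_lookup, 0.05, [], record_failure)
fast_result = fail_open(fast_geo_lookup, 0.05, [], record_failure)
print(slow_result, fast_result, failures)
```

The slow lookup should come back as an empty list with one recorded failure, while the fast one returns its results untouched. The page still renders either way, which is the point: the user experience is valued over the completeness of the internal feature.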

Terminology for Faults

Jon was keen to clarify several terms relating to fault tolerance:

- Do not confuse variation with faults. A Fault is an unexpected variation which cannot be adapted to or masked.

- Complicated vs. Complex. A car is complicated (but ultimately understandable in its entirety); traffic flow is complex (and not understandable or predictable in its entirety).

Team Strategies for Resilience

In my experience, the importance of the right team organization and team interactions is often hugely underestimated by organizations building software systems. It was therefore good to see Jon conclude his talk by emphasizing ways in which team activities and team organization can help build a resilient system. Jon recommended the following:

- Encourage the “anticipatory” skills in developers etc.

- Ask people “What can go wrong?”

- Respect and act on the answers

- Run open architectural reviews – bring in product team, support, IS, Ops

- Everyone has a no-go vote at “Go/No-Go” meetings

- Play “Game Day” exercises

- How much damage could we inflict on the system?

- What would happen if we [pulled this cable]?

- Drill (rehearse) for failures

Software developers, testers and ops people usually have a very good idea of what is likely to break, so making use of this knowledge is crucial when looking for system resiliency; a key part of surfacing that knowledge is to provide such people with real ownership of system designs so they can see their concerns have a positive impact.

http://www.flickr.com/photos/louish/5611657857/sizes/m/in/photostream/

Summary

Much of what Jon shared feels like common sense, but it’s surprising how many organizations building software systems follow so few of these common sense recommendations, and so end up with flaky, unreliable systems.

Jon’s closing remark summed up the talk nicely:

“It’s all going to break, because we don’t know what we’re doing as humans”

We therefore need to make systems safe for WHEN they fail.

Books on System Resilience

Many of these concepts are covered in recent books such as: