LondonCD meetup group on 12 June 2017. The other posts are linked at the end of this article.

Continuous Delivery for web applications is (in 2017) largely a solved problem, but it becomes more difficult where data and databases are concerned. (I have written quite a bit about Continuous Delivery and Databases on the Redgate Simple Talk website, which is worth a read if you’re interested.) In the meetup, we explored some of these challenges and some solutions to Continuous Delivery for databases. (Thanks to Alex Yates of DLM Consultants for his expertise in facilitating the discussion!)

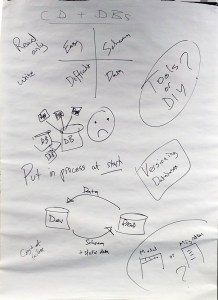

- Schema-only changes or situations where the data is read-only are fairly easy to deal with.

- Dealing with data changes and read-write access to databases is more difficult.

- Using tools can speed up and simplify the management of scripted DB changes, but choose carefully because you might get vendor lock-in.

- Databases with many applications or services connected are especially painful to deal with due to the high coupling.

- It’s useful to get database schemas* and reference/static data into version control, preferably at the start of a new database lifecycle.

- There are two main approaches to automated database version changes, but these are not very compatible – for a particular database you need to choose just one:

  - Migrations (sequential scripts)

  - Model (generate and apply a diff)
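To make the difference between the two approaches more concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module. It is not how any particular tool works: the `customer` table, the `schema_version` tracking table, and the function names are all assumptions made up for illustration, and the model-style diff only handles added columns.

```python
import sqlite3

# Hypothetical, ordered migration scripts: (version, SQL).
MIGRATIONS = [
    (1, "CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"),
    (2, "ALTER TABLE customer ADD COLUMN email TEXT"),
]


def apply_migrations(conn, migrations):
    """Migrations approach: run each not-yet-applied script in version order."""
    # Assumed tracking table that records which versions have been applied.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS schema_version (version INTEGER PRIMARY KEY)"
    )
    applied = {row[0] for row in conn.execute("SELECT version FROM schema_version")}
    newly_applied = []
    for version, sql in sorted(migrations):
        if version in applied:
            continue  # already run against this database
        conn.execute(sql)
        conn.execute("INSERT INTO schema_version (version) VALUES (?)", (version,))
        newly_applied.append(version)
    conn.commit()
    return newly_applied


def table_columns(conn, table):
    """Return {column_name: declared_type} for a table (empty if it is absent)."""
    # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
    return {row[1]: row[2] for row in conn.execute(f"PRAGMA table_info({table})")}


def diff_against_model(conn, table, desired_columns):
    """Model approach: compare the live table with the desired state and
    generate the statements needed to close the gap (added columns only)."""
    current = table_columns(conn, table)
    return [
        f"ALTER TABLE {table} ADD COLUMN {name} {col_type}"
        for name, col_type in desired_columns.items()
        if name not in current
    ]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    print(apply_migrations(conn, MIGRATIONS))
    desired = {"id": "INTEGER", "name": "TEXT", "email": "TEXT", "phone": "TEXT"}
    print(diff_against_model(conn, "customer", desired))
```

With migrations, the scripts themselves are the source of truth and the database remembers which ones it has seen. With the model approach, the desired end state is the source of truth and the change script is derived each time, which is one reason the two are awkward to mix on the same database.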

In my experience, many of the problems associated with Continuous Delivery for databases can be solved by tackling some basic database housekeeping, such as data archiving, good naming, separate BI systems, and matching Production and non-Production technology. In many cases I have seen, databases that were (sometimes proudly!) described as “tens of terabytes in size” actually needed only a few hundred gigabytes of data for most live transactions; addressing this bloat is a key enabler for Continuous Delivery.
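As a rough illustration of the data archiving idea, the sketch below moves rows older than a cutoff date out of a live table and into an archive table in a single transaction. It uses Python's standard-library `sqlite3` module; the `orders` and `orders_archive` tables, the `created_at` column, and the function name are hypothetical, and a real system would need to consider foreign keys, batching, and where the archive actually lives.

```python
import sqlite3


def archive_old_rows(conn, cutoff):
    """Move orders created before `cutoff` (an ISO date string) into
    orders_archive and return the number of rows removed from orders."""
    # `with conn` wraps both statements in one transaction: it commits if
    # both succeed and rolls back if either raises.
    with conn:
        conn.execute(
            "INSERT INTO orders_archive SELECT * FROM orders WHERE created_at < ?",
            (cutoff,),
        )
        moved = conn.execute(
            "DELETE FROM orders WHERE created_at < ?", (cutoff,)
        ).rowcount
    return moved
```

A smaller live table makes it cheaper to restore, copy, and test against, which is what makes frequent, automated database changes practical.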

* ( ‘schemata’, although correct, seems a bit pedantic, even for a Greek-speaker like me 🙂 )

On 12 June 2017 we had a London Continuous Delivery meetup at Endava in London. We used a modified form of the Open Spaces format for the meetup, with less initial open discussion and more guidance/suggestions on discussion topics. (Based on past experience with events like PIPELINE Conference, this “accelerated” approach is easier for people new to Open Spaces.)

Some good discussions came out of the evening:

- Continuous Security in Continuous Delivery

- Difficulties and Solutions for Continuous Delivery of Databases

- Continuous Delivery for Legacy/Heritage Systems

- Things I Do Not Like About Continuous Delivery

Thanks to everyone who attended, and to Endava for hosting!